
Facebook’s Anatomy

Dissecting your favourite platform

Team Members

Can Ture, Carina Albrecht, Ektor Theoulakis, Giovanni Rossetti, Leonardo Sanna, Lucie Chateau, Mattia Lussana, Rosa Arroyo, Stefano Calzati, Xiaoyang Zhao, Giacomo Flaim, Alessia Musio

Mercator Working Group: Bec Connolly, Daniel Bauer, Karina Meerman, Martin Lopatka

DATACTIVE: Guillen Torres, Davide Beraldo, Jeroen de Vos (facilitator)

Contents

- Summary of Key Findings

- 1. Introduction

- 2. Initial Data Sets

- 3. Research Questions

- 4. Methodology

- 5. Findings and Discussion

- 6. Conclusion

- 7. References

Summary of Key Findings

Facebook’s use of dark patterns and interface design is at odds with what its data policy says: that we, the users, are in charge of our data and our choices. Dark patterns nudge and shape how we share our data. Facebook presents the data download as “everything we have on you”, even though the additional data we collected using other tools contradicts that claim. Studying Facebook to better understand how our data is used proves to be a hard task, and it seems designed to be that way. This project is a first step towards understanding the nuances of Facebook’s data collection and towards developing tools and procedures that can help analyse Facebook data.

1. Introduction

Facebook is simply too big to ignore. As of 31 March 2019, it had over 2.38 billion monthly active users worldwide (Facebook Newsroom, 2019). With a global population of about 7.6 billion (World Bank, 2019), that is equivalent to roughly 31% of the world's population. Facebook can exert immense influence over global access to news, political opinion and the spread of (mis)information (Lazer et al., 2018), and holds an enormous share of the online advertising market (Wodinsky, 2019). Considering the vastness of Facebook’s reach, we should be more aware of its internal workings and policies. This is a single private company with a huge impact on our social and cultural lives. Its lack of transparency and its sophistication deserve more scrutiny, as the company gives users limited options to control what information it collects, retains, and uses, including for targeted advertising, which appears to be on by default (Ranking Digital Rights, 2018). This project aimed to find out how exactly Facebook gets this information out of us, using two different approaches:

- An analysis of its interface and use of “dark patterns”, through the eyes of a new user creating a Facebook profile for the first time;

- An analysis of the data that Facebook collects and allows us to access, such as through the “Download Your Information” functionality, and other points of data we can gather by other means, such as our local browser cookies and trackers.

2. Initial Data Sets

- Personal data files provided by the “Download Your Information” functionality on Facebook

- Facebook cookie files placed in our local browsers

- Facebook trackers, traced using Firefox Lightbeam add-on

3. Research Questions

- How does Facebook collect user data?

- What may that data be used for?

- How does Facebook get us to surrender that data?

4. Methodology

4.1 Dark Patterns

Dark patterns are defined as psychological loopholes that designers exploit in order to engage us (Brignull, 2019). These powerful and persuasive techniques are commonly found in the interface and lead us to reveal more information about ourselves than we set out to. The study aims to identify which dark patterns lead users to provide more data. We categorised the dark patterns present when creating a new Facebook profile and focused on patterns that corresponded to points of data input. In this way, we could relate our findings to the data that Facebook allows us to gather and process. We also identified the interface elements and language used by the platform. To achieve this, we carried out the following activities:

- Literature review for first findings.

- Screenshots of the new-user onboarding process:

- Categorised the screenshots according to dark patterns research (Brignull, 2019) into 3 categories, ‘Language and Framing’, ‘Presettings or Defaults’ and ‘Gamification’, each representing identifiable points of data input.

- Categorised interface elements into text boxes, icon buttons and default buttons, and recorded the language used.

- Compared the new-profile process with an existing profile in terms of design and interface.

- Visualisation in GIF format, colour-coded according to the 3 categories.

- Analysis of Facebook Data Policy.

- "Mature" profile: 7 of our team members provided their private Facebook data for the study. We used it as a baseline to compare what the data file from a “mature” Facebook account looks like versus the data from a fresh account. We also compared their data before and after they interacted further with Facebook during the week, to see whether we could notice any significant changes.

- "Fresh" profile that interacted both within and outside Facebook: we created 2 profiles for this purpose. Besides collecting their data before and after the interactions, we observed and documented, verbally and visually, each of our interactions with Facebook and the platform’s responses, including the process of creating new accounts. This information fed into the "dark patterns" analysis of Facebook onboarding.

- "Fresh" profile with interaction only outside Facebook: to gather meaningful evidence of Facebook’s access to data we do not directly provide through the platform, we created 4 brand new Facebook profiles to track how web browsing outside of Facebook is reflected in the data collected by the platform. To make the results comparable, each user followed the same instructional protocol for web browsing and then downloaded the information that Facebook had collected. We carried out the web browsing on a clean, newly installed application (Mozilla Firefox Browser Developer Edition), so that the experiment was not confounded by the researchers' browsing history.
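The before/after comparison described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the tool the team built: it assumes each "Download Your Information" export has been unpacked into its own folder, and the function names `snapshot` and `diff_snapshots` are hypothetical.

```python
import hashlib
from pathlib import Path


def snapshot(root: Path) -> dict[str, str]:
    """Map every file under root (by relative path) to a SHA-256 digest of its content."""
    return {
        path.relative_to(root).as_posix(): hashlib.sha256(path.read_bytes()).hexdigest()
        for path in root.rglob("*")
        if path.is_file()
    }


def diff_snapshots(before: dict[str, str], after: dict[str, str]) -> dict[str, list[str]]:
    """Classify files as added, removed, or changed between two unpacked exports."""
    return {
        "added": sorted(after.keys() - before.keys()),
        "removed": sorted(before.keys() - after.keys()),
        "changed": sorted(
            name
            for name in before.keys() & after.keys()
            if before[name] != after[name]
        ),
    }
```

Running `diff_snapshots(snapshot(Path("export_before")), snapshot(Path("export_after")))` (folder names are placeholders) lists which files appeared or changed after a browsing session, which helps tie each documented action to the data extract it affected.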

5. Findings and Discussion

5.1 Dark Patterns

- Language

- Discrepancies between how the interface and design of the onboarding process nudge us to share our data and how the language of Facebook’s data policy puts the responsibility in our hands: “You can choose to provide information in your Facebook profile fields or Life Events about your religious views, political views, who you are "interested in," or your health. This is subject to special protections under EU law.”

- “You” vs. Facebook: “Facebook” or “us” is emphasised during the first four screens of the landing page when creating a new account, but disappears almost completely once the account is created. From then on “you” is emphasised, and Facebook’s discourse of authenticity and empowerment comes into play. Zuckerberg famously said: “You have one identity. The days of you having a different image for your work friends or co-workers and for the other people you know are probably coming to an end pretty quickly (…). Having two identities for yourself is an example of a lack of integrity.” (van Dijck & Poell, 2013)

- Privacy: “You control how you share your stuff with people and apps on Facebook”. This language offers possibilities and grants agency to the user, thereby shifting responsibility away from Facebook. The wording probably underwent a major overhaul after the public scrutiny that followed Cambridge Analytica; Facebook now brands itself as a security- and privacy-conscious platform, placing the privacy settings third in the profile-building process. This increases trust in the platform and engagement with it.

- Discursivity and Interpellation: The landing page spells out the motivations a user should have for joining Facebook. In the right-hand column, the benefit-driven text has sensory verbs front-loaded and in bold. Once the user arrives on the newsfeed, other elements such as status updates call out to them. Though the function is called “create post”, “What’s on your mind” is significantly bigger. This interpellation creates a discursive relationship with the interface, giving it a voice, and the call to action encourages the user to express personal beliefs and thoughts. “Press Enter to post” underneath the comment space introduces the new user to how commenting works; this hint does not normally appear on an existing account. The interface accompanies the new user discursively, subtly guiding them and thereby giving the impression that Facebook will always be easy to use and intuitive.

- Sharing Profile Info and Bio is set to Public, even though the profile set-up emphasises that you have control over this and the “Friends” option, rather than “Public”, is selected on that screen.

- The default profile photo blends into the background because of its colour. The natural response is to want to stand out, and therefore to identify yourself.

- In the Advertising settings, preset selections are shown in neutral colours, which makes them more likely to be accepted (and reworked, that is, appropriated) by users. This specific shade of grey can also cause confusion because it resembles the page background colour, so the user may be nudged to leave the options just as they found them.

- “Next” button: a constant, natural call to action. Its colour stands out in the middle of the new, empty newsfeed.

- Progress bar: gamifies the profile-building process. It starts out at 4/9 even though the user has not yet given any information (neither friends, nor location, nor profile photo).

- Profile photo: the imagery of the “half-empty” bowl that needs to be filled is a call to action to upload a picture.

- Imagery: “Uploading a bio” or adding more information about yourself is accompanied by gamified icons and imagery: the chat bubble invites discussion and the star connotes a medal or an achievement to unlock.

- Did You Know?: This feature provides the opportunity to showcase yourself in a “unique” way. It also encourages a positive response: as Facebook itself famously reported in its research on “massive-scale emotional contagion”, users are most likely to be affected by positive emotions on their newsfeed (Kramer et al., 2014). Purple has ‘dynamic’, ‘exciting’ and ‘playful’ connotations which urge users to engage with it (Hynes, 2009). Together, these factors act as an affective sweetener for introducing the self and creating a profile. The answer to the question is framed in a celebratory picture, with balloons marking the fact that our research persona Katharina took part in this function and divulged more information.

Figure 1: onboarding illustration of dark patterns

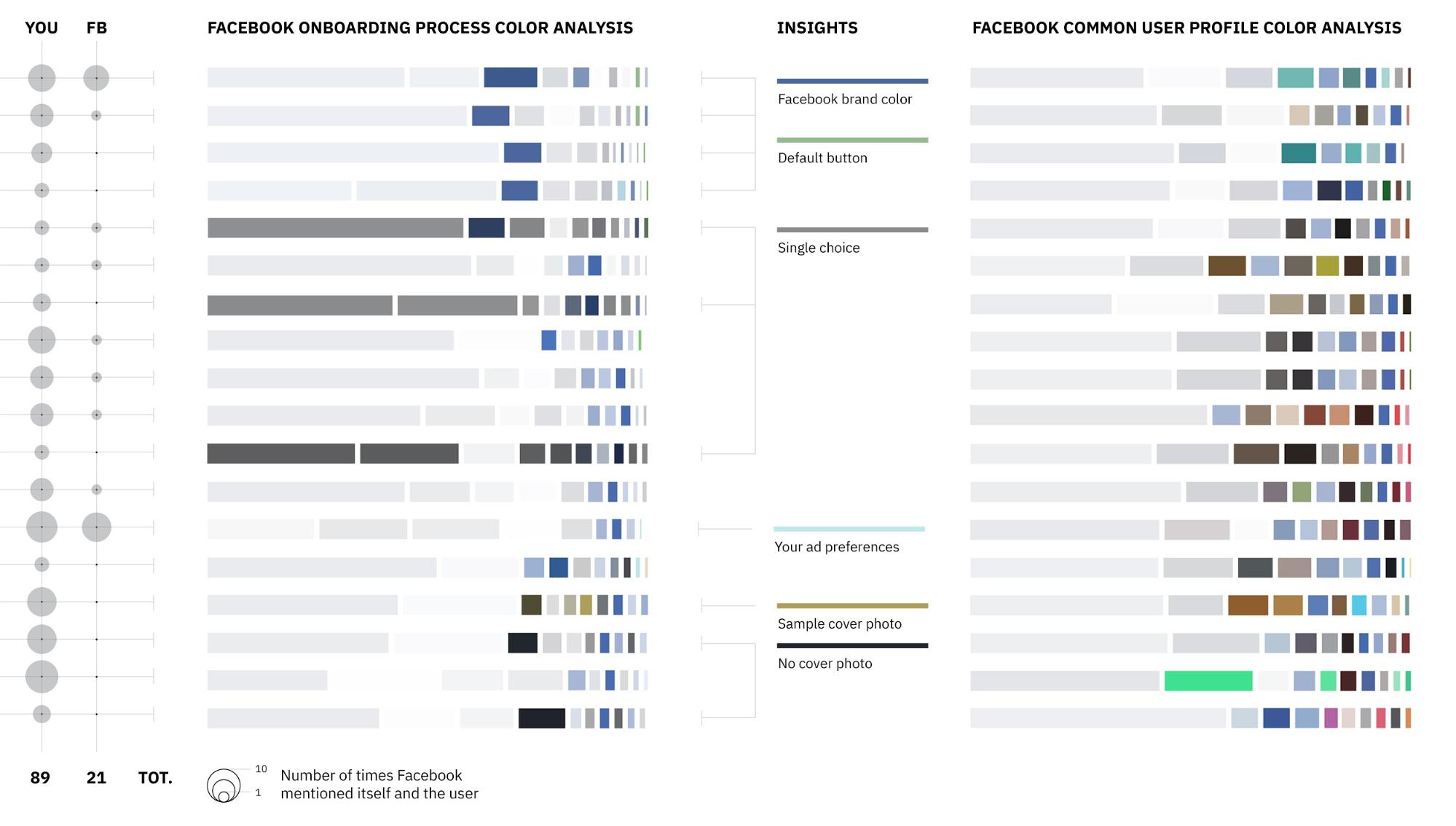
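The three-category coding scheme from the methodology can be represented as a small data structure, which makes it straightforward to tally how many coded interface elements fall into each category. The sketch below is illustrative only: the class and field names are hypothetical, and the example rows paraphrase observations from the list above; they are not the team's full coding sheet.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    LANGUAGE_AND_FRAMING = "Language and Framing"
    PRESETTINGS_OR_DEFAULTS = "Presettings or Defaults"
    GAMIFICATION = "Gamification"


@dataclass(frozen=True)
class Observation:
    element: str          # interface element seen in a screenshot
    category: Category    # dark-pattern category assigned by the coder


def tally(observations: list[Observation]) -> Counter:
    """Count coded observations per dark-pattern category."""
    return Counter(obs.category for obs in observations)


# Illustrative rows paraphrased from the findings, not a complete data set.
EXAMPLES = [
    Observation("'What's on your mind' prompt", Category.LANGUAGE_AND_FRAMING),
    Observation("Profile info and bio visibility preset to Public", Category.PRESETTINGS_OR_DEFAULTS),
    Observation("Progress bar starting at 4/9", Category.GAMIFICATION),
    Observation("Half-empty bowl profile photo imagery", Category.GAMIFICATION),
]

counts = tally(EXAMPLES)
```

Keeping the category as an enumeration rather than free text prevents spelling variants from splitting one category into several when the counts are later colour-coded for a visualisation.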

A comparison between the language used and the colour scheme of a “fresh” Facebook profile vs. a common user profile is illustrated in Figure 2:

Figure 2: language and colour scheme used in a Fresh vs. a mature Facebook profile

5.2 Data analysis

- It is hard to create artificial personas on Facebook for research purposes: two of the newly created accounts were blocked for review, and the users were asked to provide valid phone numbers or profile pictures to verify their identities. We see two possible reasons for this: first, all testers used the same IP address; second, AI-generated photos were used as profile pictures. As a result, two of the accounts remained active for only one day, during which we were able to browse outside Facebook and interact with some features of the platform, i.e. liking pages, joining groups and sending messages.

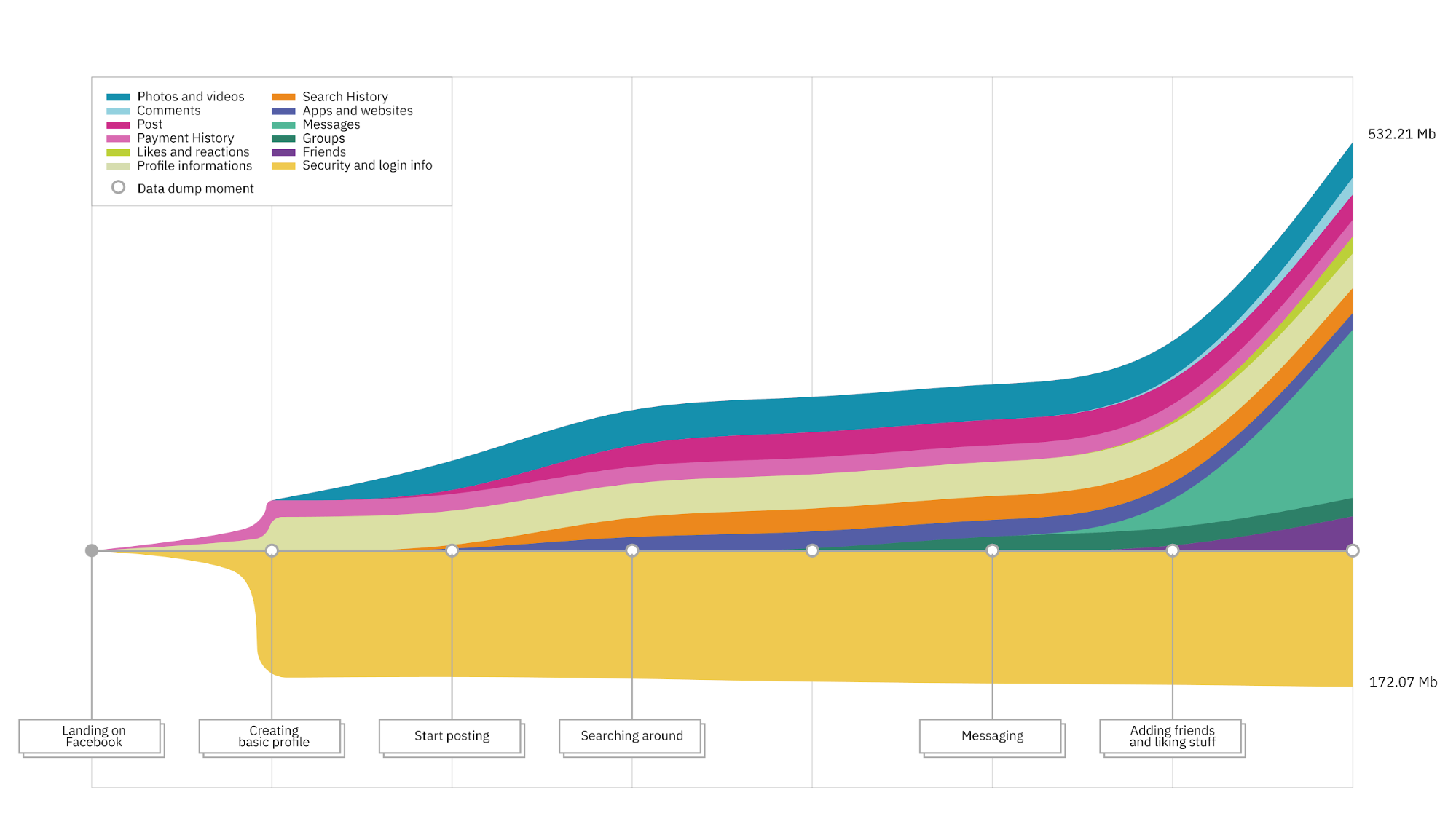

- Analysing and comparing many downloaded files from Facebook is not simple. The folder structure that organises these files is specific to Facebook, which is why we needed a dedicated data parser for it. Collecting the downloaded files systematically, in a way that would be useful for visualisation, was also challenging: at the end of the data collection we had a hard time matching the actions taken on Facebook to the data extracts we had. This revealed the need for a better-structured approach that clearly documents the actions corresponding to each data extract. The original plan was to visualise the "life of data" for all the data we collected, from all profiles, but in the end we could only practically generate a visualisation of one of the "fresh" profiles (see Figure 3).

Figure 3: the “life of data” of a fresh Facebook profile
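A parser for such an export does not need to know every file in advance. The following minimal sketch, which is not the parser used in this project, walks an unpacked export and summarises each JSON file it finds. It assumes the export was requested in JSON format and that the first folder level corresponds to a data category; both are assumptions, and the function names are hypothetical.

```python
import json
from pathlib import Path
from typing import Any


def count_leaf_values(node: Any) -> int:
    """Count scalar values nested anywhere inside a parsed JSON document."""
    if isinstance(node, dict):
        return sum(count_leaf_values(value) for value in node.values())
    if isinstance(node, list):
        return sum(count_leaf_values(item) for item in node)
    return 1


def summarise_export(root: Path) -> list[dict[str, Any]]:
    """Return one summary row per JSON file found under root, sorted by path."""
    rows = []
    for path in sorted(root.rglob("*.json")):
        with path.open(encoding="utf-8") as handle:
            data = json.load(handle)
        relative = path.relative_to(root)
        rows.append({
            # First path component; for a file at the top level this is the file name.
            "category": relative.parts[0],
            "file": relative.as_posix(),
            "values": count_leaf_values(data),
        })
    return rows
```

Summarising each download into rows like these, together with a timestamp and a note of the action taken just before, would give the kind of documented trail that the visualisation needed.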

- The cookie analysis showed that the file size of the Facebook cookie grew steadily as we interacted with Facebook and followed the browsing protocol. Browsing caused an increase of some 10-20% in cookie size, suggesting that Facebook collects information from websites that users visit outside the platform in separate tabs.

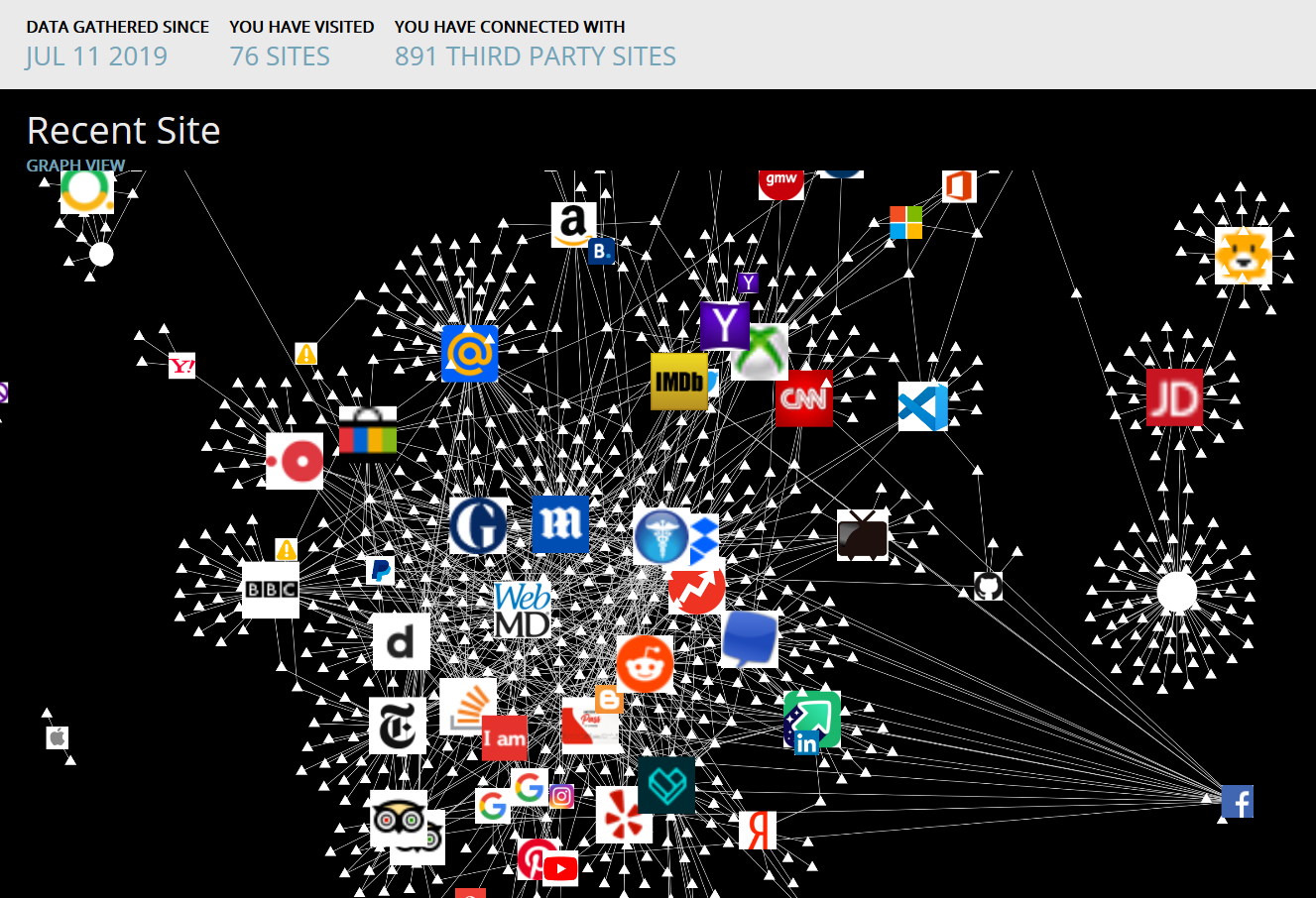
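The growth figure can be computed by measuring the cookie payload at each step of the protocol and expressing it relative to the first measurement. The sketch below shows one way to do this; the measurement method (summing the UTF-8 length of cookie names and values) and the function names are assumptions, and the numbers in the usage note are made up for illustration.

```python
def cookie_bytes(cookies: dict[str, str]) -> int:
    """Total UTF-8 size of cookie names and values, in bytes."""
    return sum(
        len(name.encode("utf-8")) + len(value.encode("utf-8"))
        for name, value in cookies.items()
    )


def percent_growth(sizes_in_bytes: list[int]) -> list[float]:
    """Growth of each measurement relative to the first one, in percent."""
    if not sizes_in_bytes:
        return []
    baseline = sizes_in_bytes[0]
    if baseline <= 0:
        raise ValueError("baseline size must be positive")
    return [round(100 * (size - baseline) / baseline, 1) for size in sizes_in_bytes]
```

For example, hypothetical measurements of 1000, 1080 and 1150 bytes give `percent_growth([1000, 1080, 1150])` equal to `[0.0, 8.0, 15.0]`.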

- To reveal the accumulation of third-party sites linked to Facebook, we compared the number of tracker connections to Facebook before and after browsing. After following the off-Facebook browsing protocol, the number of tracker connections from external websites to Facebook rose from 0 to 21, suggesting that Facebook collects browsing information as users visit non-Facebook websites (see Figure 4).
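Counting such connections can be sketched as follows, given a log of which resources each visited page requested. This is a hedged illustration, not the Lightbeam export format: the input is assumed to be a list of (page URL, requested resource URL) pairs, the list of Facebook-owned domain suffixes is an assumption and may be incomplete, and the function names are hypothetical.

```python
from urllib.parse import urlsplit

# Assumed, non-exhaustive list of Facebook-owned domains.
FACEBOOK_SUFFIXES = ("facebook.com", "facebook.net", "fbcdn.net")


def host_matches(host: str, suffixes: tuple[str, ...]) -> bool:
    """True if host equals one of the suffixes or is a subdomain of one."""
    return any(host == suffix or host.endswith("." + suffix) for suffix in suffixes)


def sites_connecting_to_facebook(requests: list[tuple[str, str]]) -> set[str]:
    """Hosts of non-Facebook pages that requested at least one Facebook-hosted resource."""
    sites = set()
    for page_url, resource_url in requests:
        page_host = urlsplit(page_url).hostname or ""
        resource_host = urlsplit(resource_url).hostname or ""
        if host_matches(resource_host, FACEBOOK_SUFFIXES) and not host_matches(
            page_host, FACEBOOK_SUFFIXES
        ):
            sites.add(page_host)
    return sites
```

Applying the function to the request log recorded before and after the browsing protocol and comparing the sizes of the two resulting sets yields the kind of pre/post count reported above.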

6. Conclusion

What is Facebook’s definition of “your information”? This is the new question we propose at the end of this project. We observed that Facebook collects a lot of data about us, such as information gathered during our browsing activities, yet it does not give us a transparent way of looking into this data. Facebook's language gives us a false sense of empowerment in order to keep us engaged with the platform. Ultimately, Facebook’s use of dark patterns and interface design is at odds with what its data policy says: that we, the users, are in charge of our data and our choices. Dark patterns nudge and shape how we share our data. One thing that feels insincere is that Facebook presents the data download as “everything we have on you”, even though the additional data we collected contradicts that. Future studies might develop systematic ways of collecting and observing the process of data collection, so that this question can be properly answered.

We hypothesised that a few prompts for user data would balloon into lots of inferred data for Facebook. In our mockups that was the case, but a few days of collecting data and running tests with the parser were not sufficient to generate enough data for comparison and good visualisation. Reconstructing the evolution of the explicit and inferred data that Facebook holds on us requires more activity time and a more structured data collection procedure, but we designed an initial protocol and tool that can serve as a starting point for future research projects.

7. References

Brignull, H. (2019). Dark Patterns. Retrieved from: https://www.darkpatterns.org/types-of-dark-pattern

Cialdini, R. (2007). Influence: The Psychology of Persuasion. Collins Business Essentials Edition.

Facebook Newsroom. (2019). Company Info. Retrieved from: https://newsroom.fb.com/company-info/

Ghose, T. (2015, Jan 27). What Facebook Addiction Looks Like in the Brain. LiveScience. Retrieved from: https://www.livescience.com/49585-facebook-addiction-viewed-brain.html

Gilroy-Ware, M. (2017). Filling the Void: Emotion, Capitalism and Social Media. Repeater Eds. pp. 244.

Hynes, N. (2009). Colour and meaning in corporate logos: An empirical study. Journal of Brand Management, 16, 545-555. DOI: https://doi.org/10.1057/bm.2008.5

Kramer, A. D. I., Guillory, J. E. & Hancock, J. T. (2014). Experimental evidence of massive-scale emotional contagion through social networks. PNAS, 111(24), 8788-8790. DOI: https://doi.org/10.1073/pnas.1320040111

Lazer, D. M. J., Baum, M. A., Benkler, Y., Berinsky, A. J., Greenhill, K. M., Menczer, F., ... Zittrain, J. L. (2018). The science of fake news. Science, 359, 1094-1096. DOI: 10.1126/science.aao2998

Ranking Digital Rights. (2018). Facebook, Inc. Retrieved from: https://rankingdigitalrights.org/index2018/companies/facebook/

Schrems (2012). Legal Procedure against “Facebook Ireland Limited”. Retrieved from: http://europe-v-facebook.org/EN/Complaints/complaints.html

van Dijck, J., & Poell, T. (2013). Understanding Social Media Logic. Media and Communication, 1(1), 2-14. DOI: 10.12924/mac2013.01010002

Wodinsky, S. (2019, July 17). Big Tech Claims It’s Not Choking Competitors, but Data Suggests Otherwise. Adweek. Retrieved from: https://www.adweek.com/digital/big-tech-claims-its-not-choking-competitors-but-data-suggests-otherwise/

World Bank. (2019). Data, Population, total. Retrieved from: https://data.worldbank.org/indicator/sp.pop.totl?end=2018&start=2014

-- JedeVo - 24 Jul 2019

Topic revision: r1 - 24 Jul 2019, JedeVo

Copyright © by the contributing authors. All material on this collaboration platform is the property of the contributing authors.
